The power of representation: Adding powers of two

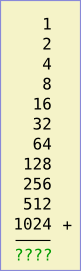

On the left is an addition problem. If you know the answer without thinking, you’re probably a geek.

Suppose you had to solve a large number of problems of this type: adding consecutive powers of 2, starting from 1. If you did enough of them you might guess that $1 + 2 + 4 + \dots + 2^{n-1}$ is always equal to $2^n - 1$. In the example on the left, we’re summing from $2^0$ to $2^{10}$ and the answer is $2^{11} - 1 = 2047$.
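If you'd rather let a machine do the guessing, here is a minimal Python sketch that adds the terms the slow way and compares the result against the suspected pattern. The function name `sum_powers_of_two` is just something I made up for illustration, and checking a handful of values of n is evidence for the pattern, not a proof.

```python
def sum_powers_of_two(n):
    """Add 2^0 + 2^1 + ... + 2^(n-1), one term at a time."""
    return sum(2 ** k for k in range(n))


# Compare the brute-force sum with the guessed closed form 2^n - 1.
for n in range(1, 13):
    assert sum_powers_of_two(n) == 2 ** n - 1

# The example from the post: eleven terms, 2^0 through 2^10.
print(sum_powers_of_two(11))  # 2047
```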

And if you cast your mind back to high-school mathematics you might even be able to prove this using induction.
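For the record, the induction argument might go something like this (a sketch, using $k$ as the summation index). The base case is $n = 1$, where the sum is just $2^0 = 1 = 2^1 - 1$. For the inductive step, assume the formula holds for some $n \ge 1$ and add the next term:

$$
\begin{aligned}
\sum_{k=0}^{n-1} 2^k &= 2^n - 1 && \text{(assumption)} \\
\sum_{k=0}^{n} 2^k &= \left(2^n - 1\right) + 2^n \\
&= 2 \cdot 2^n - 1 \\
&= 2^{n+1} - 1
\end{aligned}
$$

So if the formula holds for $n$ terms it also holds for $n + 1$ terms, and therefore for every $n \ge 1$.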

But that’s a lot of work, even supposing you see the pattern and are able to do a proof by induction.

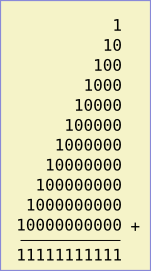

Let’s instead think about the problem in binary (i.e., base 2). In binary, the sum looks like the image on the right.

There’s really no work to be done here. If you think in binary, you already know the answer to this “problem”. It would be a waste of time to even write the problem down. It’s like asking a regular base-10 human to add up 3 + 30 + 300 + 3000 + 30000, for example. You already know the answer. In a sense there is no problem because your representation is so nicely aligned with the task that the problem seems to vanish.
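To see the binary view for yourself, here is a small Python sketch that prints each term of the example as an 11-digit binary string, followed by the total. The helper name `binary_rows` is my own invention for this illustration. Each term has its single 1 in a different column, so there are never any carries and the sum is just a row of 1s.

```python
def binary_rows(n):
    """Return 2^0 .. 2^(n-1) as zero-padded binary strings of width n."""
    return [format(2 ** k, f"0{n}b") for k in range(n)]


rows = binary_rows(11)
for row in rows:
    print(row)

# Add the terms up and show the total in binary.
total = sum(int(row, 2) for row in rows)
print("-" * 11)
print(format(total, "b"))  # 11111111111

# No two terms share a bit, so a bitwise OR gives the same result as addition.
combined = 0
for row in rows:
    combined |= int(row, 2)
assert combined == total

# The base-10 analogue from the post.
print(3 + 30 + 300 + 3000 + 30000)  # 33333
```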

Why am I telling you all this?

Because, as I’ve emphasized in three other postings, if you choose a good representation, what looks like a problem can simply disappear.

I claim (without proof) that lots of the issues we’re coming up against today, as we move to a programmable web and integrated social networks and struggle with data portability, ownership, and control, will similarly vanish if we simply start representing information in a different way.

I’m trying to provide some simple examples of how this sort of magic can happen. There’s nothing deep here. In the non-computer world we wouldn’t talk about representation, we’d just say that you need to look at the problem from the right point of view. Once you do that, you see that it’s actually trivial.

February 13th, 2008 at 5:19 pm

Thanks for my “a-ha” moment of the day :)

February 2nd, 2014 at 12:11 am

My Mersenne! It’s full of 1’s in binary!